Your CRM Is Lying to You. We Fixed 168,000 Records for $250. (Bespoke Data Enrichment at Scale)

Reading time: ~8 minutes · What you'll walk away with: a replicable framework for AI-powered CRM enrichment at scale, the exact cost breakdown, and a clear view of the competitive advantage hiding inside your own data.

Let me ask you something uncomfortable.

You have a CRM. You've probably spent tens of thousands, or maybe hundreds of thousands, building it, migrating data into it, training your team to use it. And right now, somewhere inside that CRM, there are records. Thousands of them. Maybe hundreds of thousands. And you have almost no idea who those companies actually are.

Not at a useful level. You know their names. You know their domains. You might have a phone number that no longer works. But industry? Sector? The type of business they run? Chances are that field is blank, wrong, or so generic it's useless.

This is not a criticism. It's an extremely common problem, and until very recently, it was also an extremely expensive one to solve. So most companies just… didn't.

One of our clients — a publicly traded industrial services company operating across more than ten countries — had 168,000 company records in HubSpot. Their investors were asking for an industry breakdown by NACE code — the European standard classification system for economic activity. Without it, they couldn't properly report, couldn't allocate marketing spend, couldn't tell their board a coherent story about who was buying from them.

The problem had been sitting there for years. And the reason it hadn't been solved? The cost was genuinely insane.

14,000

Hours of Manual Work Required

At 5 minutes per website × 168,000 accounts = 10.6 years of a full-time analyst's life. And that's before quality assurance.

The Uncomfortable Maths of Manual Data Work

Here's the equation nobody talks about openly.

If you want to classify 168,000 companies by industry, someone has to go to each website, read it, understand it, and apply the right code. That takes about five minutes per company if you know what you're doing. Five minutes is actually pretty fast.

Five minutes times 168,000 equals 14,000 hours. At a blended rate of £26 per hour, that's £364,000 in raw labour. Add project management, quality checks, a second pass for the tricky ones? You're north of £500,000.
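If you want to check the sums yourself, here is the same calculation as a few lines of Python. The inputs are the figures quoted above; the function name is just an illustrative choice.

```python
# Figures quoted in the article; nothing here is independently measured.
RECORDS = 168_000
MINUTES_PER_RECORD = 5
HOURLY_RATE_GBP = 26


def manual_effort(records, minutes_per_record, hourly_rate):
    """Return (hours, labour_cost) for classifying every record by hand."""
    hours = records * minutes_per_record / 60
    return hours, hours * hourly_rate


hours, cost = manual_effort(RECORDS, MINUTES_PER_RECORD, HOURLY_RATE_GBP)
print(f"{hours:,.0f} hours, £{cost:,.0f} in raw labour")
# prints: 14,000 hours, £364,000 in raw labour
```

Change the per-record time or the hourly rate to match your own team and the shape of the problem stays the same: the cost scales linearly with every record you add.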

And here's the thing: nobody was going to do that. It's not that the data wasn't valuable enough. It's that the cost-to-benefit calculation was broken. The task sat permanently in the "too hard" pile, which is exactly where most high-value data work lives.

The client had actually tried a workaround. One of their internal teams had a go with Perplexity, the AI research tool. It worked — technically. You could hand it a domain, it would come back with something useful.

The problem: it processed about 20 domains at a time. Credit limits hit fast. Progress was painful. It became clear very quickly that this was not a scaling strategy. It was a proof of concept wearing the clothes of a solution.

20

Domains at a Time (Perplexity Approach)

168,000 divided by 20 is 8,400 separate runs. Factor in credit resets and manual review time, and the timeline stretched into months. Nobody had months.

The Counterintuitive Solution: Don't Research. Batch.

When Periti came in to implement HubSpot across the business, this problem was baked into the scope. Our job wasn't just to set up a CRM. It was to make the data inside it actually mean something.

The insight that unlocked everything was simple — almost embarrassingly so once you see it.

The expensive part of classifying a company isn't the classification. It's the research. If you can eliminate the research bottleneck and feed clean, relevant text directly into an AI model, the classification becomes trivially fast and trivially cheap.

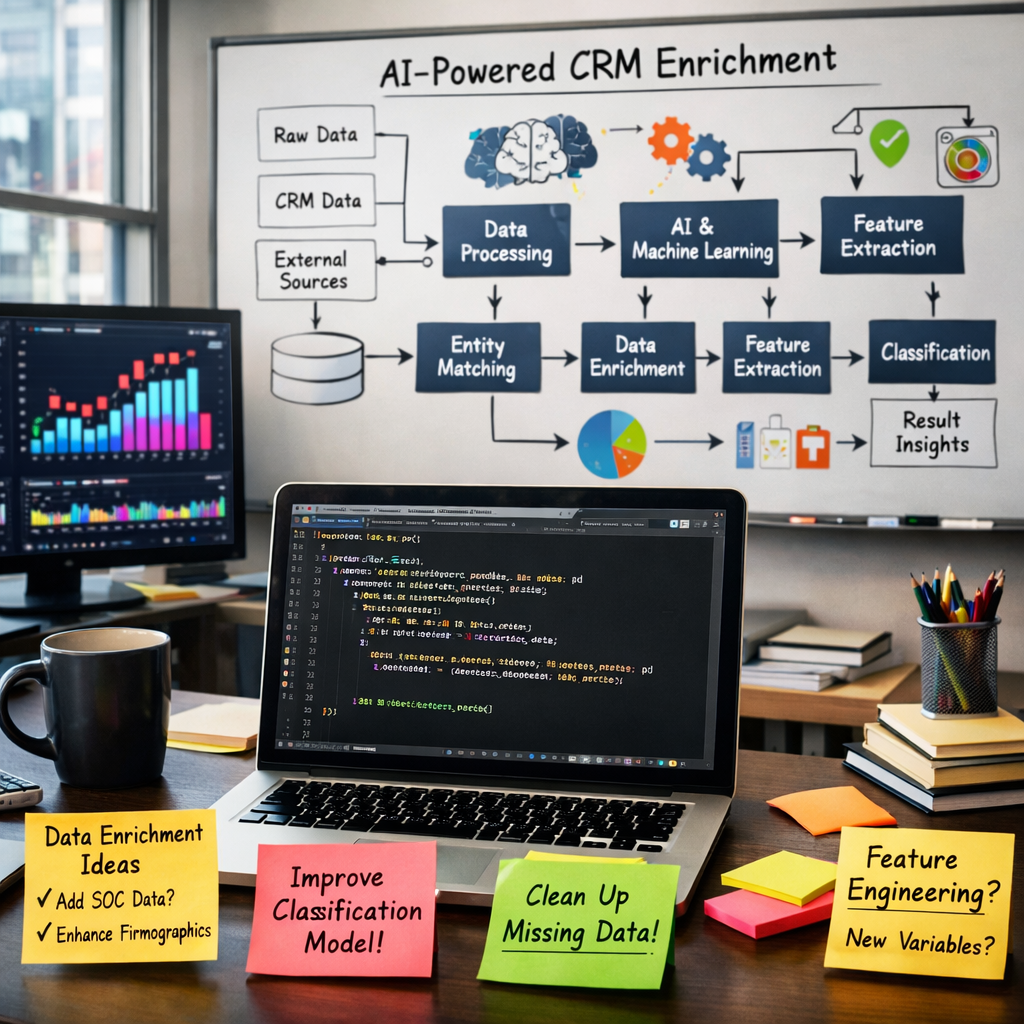

So that's what we built. A Python script that does three things at serious scale:

- Loads 100 domains at a time using Playwright, a browser automation tool that renders each website so the script can pull out the raw text and leave the visual noise behind

- Condenses that text to a 1,000-character summary and stores it in a structured JSON file

- Feeds each summary into the Anthropic Claude API with a prompt asking for the best NACE classification, a confidence score, and a one-line rationale
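To make the first two steps concrete, here is a minimal sketch of the batching, scraping, and condensing stages. It is not the production script. The function names are ours for illustration, plain truncation stands in for whatever summarising you prefer, and launching a fresh browser per domain is the simplest thing that works rather than the fastest.

```python
import json
import re

BATCH_SIZE = 100       # domains per batch, as described above
SUMMARY_CHARS = 1_000  # summary length, as described above


def batched(items, size):
    """Yield consecutive slices of `items` with at most `size` elements."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def condense(text, limit=SUMMARY_CHARS):
    """Collapse whitespace and truncate to `limit` characters.

    Assumption: simple truncation stands in for the real summarising step.
    """
    return re.sub(r"\s+", " ", text).strip()[:limit]


def fetch_visible_text(domain, timeout_ms=15_000):
    """Return the rendered body text of a site's home page via Playwright.

    Playwright is imported lazily so the helpers above run without it.
    """
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch()
        try:
            page = browser.new_page()
            page.goto(f"https://{domain}", timeout=timeout_ms)
            return page.inner_text("body")
        finally:
            browser.close()


def summarise_batch(domains, fetch=fetch_visible_text):
    """Build {domain: summary} for one batch; failed fetches map to None."""
    summaries = {}
    for domain in domains:
        try:
            summaries[domain] = condense(fetch(domain))
        except Exception:
            summaries[domain] = None  # flag for a retry or a manual look
    return summaries


def save_batch(path, summaries):
    """Write one batch of summaries to a JSON file."""
    with open(path, "w", encoding="utf-8") as fh:
        json.dump(summaries, fh, ensure_ascii=False, indent=2)
```

In use, you would loop over `batched(all_domains, BATCH_SIZE)`, call `summarise_batch` on each slice, and write the result with `save_batch`. Passing `fetch` as a parameter keeps the scraping step swappable, which also makes the rest easy to test without a browser.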

That's it. No magic. No exotic infrastructure. A Python script, a browser automation library, and an API call.
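The API call itself can be sketched like this. The prompt wording, the JSON reply format, and the function names are assumptions for illustration, not the exact prompt used on the project. `client` is expected to be an `anthropic.Anthropic()` instance from the official Python SDK, and `model` is whichever Claude model ID you have access to.

```python
import json

# Hypothetical prompt; the real project prompt is not reproduced here.
PROMPT_TEMPLATE = (
    "You are classifying a company by NACE code.\n"
    "Website summary:\n{summary}\n\n"
    "Reply with JSON only, using the keys nace_code, confidence "
    "(a number from 0 to 1) and rationale (one sentence)."
)


def build_prompt(summary):
    """Insert one website summary into the classification prompt."""
    return PROMPT_TEMPLATE.format(summary=summary)


def parse_classification(reply_text):
    """Parse the model's JSON reply; return None if it is malformed."""
    try:
        data = json.loads(reply_text)
        return {
            "nace_code": str(data["nace_code"]),
            "confidence": float(data["confidence"]),
            "rationale": str(data["rationale"]),
        }
    except (ValueError, KeyError, TypeError):
        return None


def classify(client, model, summary, max_tokens=200):
    """Send one summary to the Messages API and parse the reply."""
    response = client.messages.create(
        model=model,
        max_tokens=max_tokens,
        messages=[{"role": "user", "content": build_prompt(summary)}],
    )
    return parse_classification(response.content[0].text)
```

Returning `None` for a malformed reply, rather than raising, lets a long run carry on and leaves you with a clean list of records to retry afterwards.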

The results were pushed straight into HubSpot via the API. All 168,000 records, enriched, classified, and tagged.
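For the write-back, one reasonable approach is HubSpot's batch update endpoint for companies (`POST /crm/v3/objects/companies/batch/update`), which takes a list of record IDs and properties. The sketch below only builds the request bodies. The property names `nace_code`, `nace_confidence`, and `nace_rationale` are hypothetical custom properties you would need to create first, and the batch size of 100 reflects HubSpot's documented limit as we understand it, so check the current docs.

```python
# Assumed per-request limit for batch updates; verify against HubSpot's docs.
HUBSPOT_BATCH_LIMIT = 100


def build_batch_payloads(results, limit=HUBSPOT_BATCH_LIMIT):
    """Turn {company_id: classification} into batch-update request bodies.

    Each classification is a dict with nace_code, confidence and rationale.
    The property names written to HubSpot are hypothetical custom properties.
    """
    inputs = [
        {
            "id": str(company_id),
            "properties": {
                "nace_code": result["nace_code"],
                "nace_confidence": result["confidence"],
                "nace_rationale": result["rationale"],
            },
        }
        for company_id, result in results.items()
    ]
    return [
        {"inputs": inputs[start:start + limit]}
        for start in range(0, len(inputs), limit)
    ]
```

Each returned dictionary is one request body. Send them with whatever HTTP client you prefer, using a private app token in the `Authorization: Bearer` header.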

Why This Works When "Just Use ChatGPT" Doesn't

A fair question at this point is: why not just paste domains into Claude or ChatGPT and get classifications back?

The answer is time and money. If you used the standard Claude.ai interface, one domain at a time with manual copy-paste, each domain takes roughly 54 seconds. For 168,000 domains, that's 2,520 hours of continuous work. About 105 days if you ran it non-stop. That's before you account for the human who would have to babysit it the whole time.

The pipeline cuts that to roughly 48 hours of automated processing. No babysitting. No credit card sweats. Just a script running overnight.
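Here is that comparison worked through in Python, using only the figures quoted above. The per-domain pace for the pipeline is derived from the 48-hour total, not measured separately.

```python
RECORDS = 168_000
SECONDS_PER_DOMAIN_MANUAL = 54  # chat-window figure quoted above
PIPELINE_HOURS = 48             # pipeline figure quoted above

manual_hours = RECORDS * SECONDS_PER_DOMAIN_MANUAL / 3600
manual_days = manual_hours / 24
speedup = manual_hours / PIPELINE_HOURS
pipeline_seconds_per_domain = PIPELINE_HOURS * 3600 / RECORDS

print(f"manual: {manual_hours:,.0f} h ({manual_days:.0f} days)")
print(f"pipeline: {PIPELINE_HOURS} h, about {speedup:.1f}x faster")
print(f"pipeline pace: about {pipeline_seconds_per_domain:.2f} s per domain")
# prints:
# manual: 2,520 h (105 days)
# pipeline: 48 h, about 52.5x faster
# pipeline pace: about 1.03 s per domain
```

The wall-clock gain is only half the story. The manual figure is 2,520 hours of a person's attention, while the pipeline's 48 hours are unattended.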

$250

Total Anthropic API Cost

To classify 168,000 companies. That's approximately $0.0015 per company. Less than the cost of a single analyst's coffee break.

The Numbers. Let's Just Put Them Side by Side.

| | The Old Way (Manual) | The New Way (AI Pipeline) |
|---|---|---|
| Accounts | 168,000 | 168,000 |
| Time | 14,000 hrs / 10.6 years | ~48 hours |
| Labour cost | ~£364,000–£500,000 | €4,451 total |
| API cost | n/a | $250 (Anthropic) |
| Consulting hours | n/a | 25 hours |
| Auto-updates? | No | Yes, on every new deal |
| Data confidence | Low / variable | High |

The total project cost was €4,451. That includes 25 hours of Periti consulting time and $250 in API fees. Everything else is rounding.

But Here's the Part Nobody Talks About: It Compounds.

A one-time enrichment of 168,000 records is valuable. What's more valuable is not having to do it again.

We built a HubSpot workflow using DataHub that triggers the exact same pipeline every time a new deal is associated with a company in HubSpot. The Python script fires automatically. The NACE code gets populated. The record is enriched before anyone has to think about it.
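One way to wire this up is a custom code action inside the workflow. The sketch below follows the shape HubSpot uses for Python custom code actions as we understand it (a `main(event)` function that reads `inputFields` and returns `outputFields`), so treat those details as assumptions to check against the current docs. The `classify_domain` function is a hypothetical stand-in: in practice it would call out to wherever your scraping and classification pipeline runs. The field names are illustrative too.

```python
def classify_domain(domain):
    """Hypothetical stand-in for the scrape-and-classify pipeline."""
    raise NotImplementedError("call your own pipeline or service here")


def main(event, classify=classify_domain):
    """Workflow entry point: classify the company attached to a new deal.

    Assumes the workflow passes the company's domain as an input field
    named "domain" and maps the output fields onto company properties.
    """
    domain = (event.get("inputFields") or {}).get("domain")
    if not domain:
        # Nothing to scrape, so report that and let the workflow branch.
        return {"outputFields": {"nace_status": "skipped_no_domain"}}

    result = classify(domain)
    return {
        "outputFields": {
            "nace_status": "classified",
            "nace_code": result["nace_code"],
            "nace_confidence": result["confidence"],
        }
    }
```

Returning a status field alongside the code gives the workflow something to branch on, so records with no domain can be routed to a person instead of failing silently.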

The data doesn't decay. The classification doesn't fall behind. You don't end up with a beautifully enriched database that's 40% out of date two years later because nobody budgeted for the second pass.

This matters enormously for a company operating across ten-plus countries with different regulatory environments, different market compositions, and different investor reporting requirements. The NACE classification isn't a one-time project. It's living infrastructure.

168,000

Enriched HubSpot Records

Every one now carries a NACE code, a confidence score, and an industry classification. And every new account gets the same treatment automatically.

The Real Prize: Proprietary Data Your Competitors Don't Have

Step back from the mechanics for a second and think about what this actually means competitively.

This company now knows, with high confidence, exactly which types of businesses buy industrial maintenance services. By country. By NACE sector. By sub-category. They can see which industries over-index, which geographies are underpenetrated, which sectors are growing as a share of their customer base.

Their competitors? Still guessing. Apollo and ZoomInfo don't cover micro-organisations well in European markets — which is exactly where this client's customer base lives. The data doesn't exist anywhere else in this form. It had to be built. And now it has been.

That's not a database. That's a strategic asset. The kind that shows up in investor presentations. The kind that informs M&A decisions. The kind that, if you're being honest, probably adds a measurable premium to enterprise value.

For €4,451 and 25 hours of consulting time.

What You Should Actually Take From This

Here are the five things I'd want you to leave with:

The bottleneck isn't classification. It's research.

Once you have clean text, AI classification is fast and cheap. The expensive part is getting that text. Playwright-style scraping solves this at scale.

Consumer AI tools are not enterprise AI tools.

ChatGPT and Claude.ai are brilliant for individual tasks. For 168,000 records, you need a pipeline, not a chat window. The API is a completely different product.

Batching is the unlock.

100 domains per batch versus 1 domain per conversation is not just a 100x change in batch size. On this project the wall-clock time fell from roughly 2,520 hours to roughly 48, and the human attention required fell from constant babysitting to almost none.

Build it to run forever, not once.

A HubSpot workflow that triggers the pipeline on every new deal turns a project into infrastructure. The marginal cost of the 168,001st classification is effectively zero.

The data nobody else has is the data worth having.

Third-party enrichment tools are commodities. Everyone has access to the same Apollo and ZoomInfo data. Build your own classification layer and you have something competitors simply cannot buy.

One Last Thing

This is not a story about AI replacing analysts. The analysts still matter — for strategy, for interpretation, for the things that require genuine human judgment.

This is a story about a category of work that used to cost £500,000 and a decade now costing $250 in API fees and two days of processing. That's not automation. That's a phase change. And it's happening right now, inside companies that are willing to ask the question: what would we do if the cost of knowing were effectively zero?

If you're sitting on a large CRM with inconsistent data — and almost everyone is — this is a solvable problem. The tools exist. The cost is trivial. The barrier is mostly imagination.

About Periti Digital

Periti Digital builds HubSpot systems and AI-powered data pipelines for businesses operating across multiple regions and markets. If your CRM has a data problem, we'd love to talk. peritidigital.com/contactus